Turbo-Charging Your Stack Without Breaking It — Part 1

Making a system faster requires careful design choices. Today's highly scalable systems serve millions or even billions of requests by optimizing for speed. The decisions made while implementing each component are what allow these systems to perform at that scale.

In this article, we look at some of the key components of distributed systems and how the industry is making them faster.

Components

Protocol

QUIC (originally short for Quick UDP Internet Connections) is a modern, secure transport protocol that runs over UDP and is positioned as a replacement for TCP. It is used by large companies to optimize mobile networking, streaming, gaming, and other applications that require fast, secure, and reliable communication.

HTTP/3 runs on top of QUIC, which provides a multiplexed connection between two endpoints over UDP. TLS 1.3 is integrated by default, encrypting the payload and most of the packet header during transmission. Multiple connections can share a single UDP socket. QUIC supports both unidirectional and bidirectional streams, and the stream limits can be configured.

What makes it fast?

- The transport and cryptographic handshakes are combined, so a new connection is ready after one round trip and a resumed connection can send data with zero round trip time (0-RTT).

- It runs over UDP, which avoids TCP's rigidity and lets QUIC implement its own congestion control and packet loss recovery.

- It avoids head-of-line blocking by using multiple independent streams within one connection, so packet loss in one stream does not block the others.

- A session remains active when switching networks because the connection is identified by a connection ID (64 bits in Google's original design) rather than by the IP address and port pair.
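The head-of-line blocking point can be illustrated with a toy model. The sketch below is not real QUIC or TCP; the packet numbers, the stream names "A" and "B", and both function names are made up for illustration. It only compares what a receiver can hand to the application when one packet is lost.

```python
def deliverable_single_stream(packets, lost):
    """One ordered byte stream (TCP-like): delivery stops at the first gap."""
    delivered = []
    for seq, stream_id in packets:
        if seq in lost:
            break  # everything behind the missing packet has to wait
        delivered.append((seq, stream_id))
    return delivered


def deliverable_independent_streams(packets, lost):
    """Independent streams (QUIC-like): a gap only stalls its own stream."""
    stalled = set()
    delivered = []
    for seq, stream_id in packets:
        if seq in lost:
            stalled.add(stream_id)  # only this stream waits for a retransmit
            continue
        if stream_id not in stalled:
            delivered.append((seq, stream_id))
    return delivered


# Six packets interleaved across two hypothetical streams.
packets = [(1, "A"), (2, "B"), (3, "A"), (4, "B"), (5, "A"), (6, "B")]
lost = {3}  # packet 3, which belongs to stream A, is dropped in transit

tcp_like = deliverable_single_stream(packets, lost)
quic_like = deliverable_independent_streams(packets, lost)
print("single ordered stream:", tcp_like)
print("independent streams:  ", quic_like)
```

In this model the single ordered stream delivers only the first two packets, while stream B keeps flowing in the independent-stream case and only stream A waits for the retransmission.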

Compression

Faster data transfer over low-bandwidth networks and faster page loads both depend on efficient compression, which in turn improves the user experience.

LZ4 is one of the fastest lossless compression algorithms, suited to low-latency, high-throughput cases where CPU usage must stay minimal. It is very fast, with reported speeds of around 500 MB/s for compression and 1 GB/s or more for decompression, depending on hardware. It is mainly used for high-throughput networks and real-time streaming. It works by encoding repeated sequences of data as references to earlier occurrences.
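To make "encoding repeated sequences" concrete, here is a toy encoder in the same LZ77 family as LZ4. It is a sketch for illustration only: the token format, the function names, and the `window` and `min_match` parameters are assumptions, and it does not produce the real LZ4 block format or approach its speed.

```python
def lz_encode(data: bytes, window: int = 255, min_match: int = 4):
    """Replace repeated sequences with ("copy", offset, length) tokens."""
    tokens = []
    i = 0
    while i < len(data):
        best_len, best_off = 0, 0
        # Search the recent window for the longest earlier match.
        for j in range(max(0, i - window), i):
            length = 0
            while i + length < len(data) and data[j + length] == data[i + length]:
                length += 1
            if length > best_len:
                best_len, best_off = length, i - j
        if best_len >= min_match:
            tokens.append(("copy", best_off, best_len))
            i += best_len
        else:
            tokens.append(("lit", data[i]))  # no useful match: emit the raw byte
            i += 1
    return tokens


def lz_decode(tokens) -> bytes:
    """Rebuild the original bytes from literal and copy tokens."""
    out = bytearray()
    for token in tokens:
        if token[0] == "lit":
            out.append(token[1])
        else:
            _, offset, length = token
            for _ in range(length):
                out.append(out[-offset])  # byte-by-byte so overlapping copies work
    return bytes(out)


sample = b"abcabcabcabcabc"
tokens = lz_encode(sample)
print(tokens)
print(lz_decode(tokens) == sample)
```

For this sample, the first three bytes are emitted as literals and the remaining twelve collapse into a single copy token, which is where the size reduction comes from.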

Zstd is fast, though slower than LZ4, and is used when a higher compression ratio is needed at reasonable speed. It is often a replacement for DEFLATE/gzip, offering faster compression and decompression. It is used for data archiving, backup solutions, and database storage.

Brotli is used for compressing static web content as a successor to gzip. It reduces file sizes, which improves web performance and lowers bandwidth usage.
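The speed versus ratio trade-off between these algorithms can be explored with a small measurement harness. The sketch below uses Python's standard `zlib` module (DEFLATE, the gzip-era baseline mentioned above) purely as a stand-in, because LZ4, Zstd, and Brotli bindings are third-party packages. The payload and the `measure` helper are hypothetical, and the timings will vary by machine.

```python
import time
import zlib

# Hypothetical payload: repetitive, log-like text that compresses well.
payload = b"GET /index.html HTTP/1.1 200 OK\n" * 20_000


def measure(level):
    """Compress at the given DEFLATE level; return (compressed_size, seconds)."""
    start = time.perf_counter()
    compressed = zlib.compress(payload, level)
    elapsed = time.perf_counter() - start
    # Lossless: decompressing must give back the exact original bytes.
    assert zlib.decompress(compressed) == payload
    return len(compressed), elapsed


# Level 1 favors speed, level 9 favors ratio, level 6 is zlib's usual default.
results = {level: measure(level) for level in (1, 6, 9)}
for level, (size, seconds) in results.items():
    print(f"level {level}: {len(payload)} -> {size} bytes in {seconds * 1000:.2f} ms")
```

The same harness shape would apply to other codecs: swap the compress and decompress calls, keep the round-trip check, and compare size against time on data that resembles production traffic.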

Domain Name System (DNS) and Content Delivery Network (CDN)

Google DNS (8.8.8.8) and Cloudflare (1.1.1.1) are among the fastest public DNS resolvers because of their massive infrastructure and heavily cached records, which are served directly from memory without querying upstream DNS servers. Cloudflare offers both DNS and CDN services. Other CDNs such as Akamai and Fastly are comparably fast and target different audiences. Some optimizations common across these products are listed below.

What makes it fast?

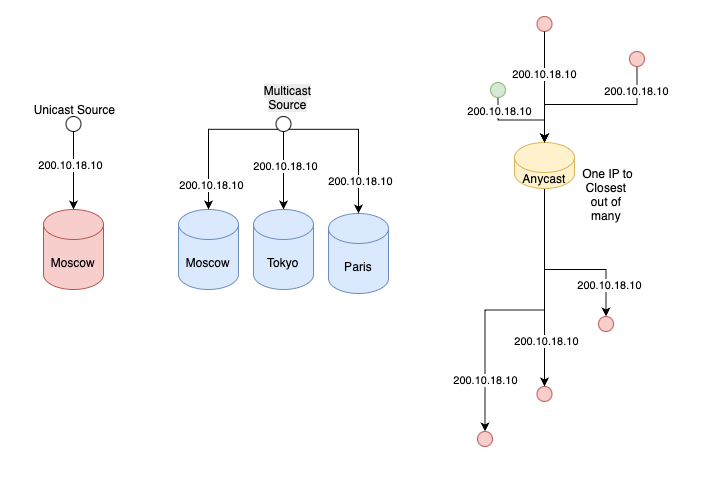

- Global anycast routing sends each user request to the closest data center, reducing the physical distance data must travel.

- Edge computing lets code run at the edge for faster execution, without a round trip to a central server.

- Content caching stores static content such as images, CSS, and JavaScript at the edge, so it loads without reaching the origin server.

- Cloudflare's Argo Smart Routing picks paths with fewer hops and less congestion to minimize network latency compared to default BGP routing.

- Instant cache purging takes effect almost immediately, whereas some other CDNs are reported to take anywhere from 15 minutes to 24 hours.

- Time To First Byte (TTFB) is reported as about 0.6 s for Fastly, compared with 1.6 to 2.7 s for others.

- Auto Minify shrinks JavaScript and CSS by stripping unnecessary characters and, in some minifiers, shortening variable names, which reduces network traffic and helps compression.

- Early Hints, prefetching, adaptive acceleration, predictive preloading, and dynamic optimization are some of the techniques used by Fastly to make content delivery faster.
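The caching and purging items above can be sketched as a tiny in-memory edge cache. This is a minimal illustration under stated assumptions, not how any particular CDN implements it: `EdgeCache`, `fetch_origin`, the 60-second TTL, and the injected fake clock are all hypothetical names and values.

```python
class EdgeCache:
    """Serve cached bodies until they expire or are purged."""

    def __init__(self, fetch_origin, ttl_seconds, clock):
        self._fetch_origin = fetch_origin  # called only on a miss
        self._ttl = ttl_seconds
        self._clock = clock                # injected so expiry can be simulated
        self._entries = {}                 # path -> (body, expires_at)
        self.origin_hits = 0

    def get(self, path):
        now = self._clock()
        entry = self._entries.get(path)
        if entry is not None and entry[1] > now:
            return entry[0]                # cache hit: no trip to the origin
        self.origin_hits += 1
        body = self._fetch_origin(path)
        self._entries[path] = (body, now + self._ttl)
        return body

    def purge(self, path):
        """Drop one entry immediately, regardless of its remaining TTL."""
        self._entries.pop(path, None)


fake_now = [0.0]  # mutable stand-in for a real clock
cache = EdgeCache(lambda path: f"body of {path}", ttl_seconds=60,
                  clock=lambda: fake_now[0])

cache.get("/app.css")      # miss: fetched from the origin
cache.get("/app.css")      # hit: served from the edge
fake_now[0] = 61.0
cache.get("/app.css")      # expired: fetched again
cache.purge("/app.css")
body = cache.get("/app.css")  # purged: fetched again
print(cache.origin_hits, body)
```

Only the misses reach the origin, which is the effect edge caching aims for; the purge call shows why fast invalidation matters when content changes before its TTL runs out.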

Programming Language

C and C++ are well suited to CPU-bound, low-level, memory-constrained applications that need the full performance of the hardware. But for building a highly concurrent, fault-tolerant, low-latency distributed system, choosing C or C++ does not always give the expected results. Two languages that perform very well for highly concurrent systems are Erlang and Elixir, and WhatsApp, Discord, and RabbitMQ are fast for this reason. Both languages compile to bytecode for the BEAM virtual machine, a highly optimized runtime that supports high concurrency, low latency, and efficient I/O operations. The emulator and some parts of the runtime system are written in C for efficiency. They are used in real-time systems such as multiplayer games, chat applications, IoT devices, financial trading systems, real-time analytics, and telecommunications.

How are they optimized?

- Processes inside the BEAM VM are lightweight, so thousands or even millions can run with minimal overhead and without one OS thread each. The VM time-slices the processes, meaning no single process can block the entire system.

- Each process is an isolated unit with its own private memory heap and mailbox. Processes communicate using fast asynchronous message passing, which avoids CPU idle time.

- Garbage collection happens per process, avoiding long pauses, and the shared-nothing architecture removes the need for locks, mutexes, or other synchronization overhead.

- Immutable data simplifies concurrency, large binaries are garbage collected by reference counting, and Erlang Term Storage (ETS) provides in-memory storage. ETS is a key-value store that supports concurrent access and efficient data retrieval.

- BEAM uses its own schedulers to distribute processes across CPU cores, which makes it well suited to parallel tasks on multi-core machines.

- Non-blocking I/O lets the runtime switch to other tasks instead of waiting on I/O, so the CPU can pick up the next task immediately.

- Processes are linked to and monitored by supervisors, which restart them on failure and so provide fault tolerance.

- A JIT compiler boosts performance by converting the compiled Erlang code to native machine code.
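The mailbox and supervisor ideas from the list above can be approximated in a few lines. The sketch below uses Python's `asyncio` only as a stand-in: its tasks are cooperative rather than preemptively time-sliced like BEAM processes, and the `counter`, `supervisor`, and `max_restarts` names are hypothetical. It shows private state reachable only through messages, and a supervisor that restarts the worker after a simulated crash.

```python
import asyncio


async def counter(mailbox, replies):
    """A 'process' with private state; it is only reachable via its mailbox."""
    total = 0  # private state, never shared with other tasks
    while True:
        message = await mailbox.get()
        if message == "crash":
            raise RuntimeError("simulated failure")
        if message == "stop":
            return
        total += message
        await replies.put(total)


async def supervisor(mailbox, replies, max_restarts=3):
    """Restart the child when it fails, up to max_restarts times."""
    restarts = 0
    while True:
        try:
            await counter(mailbox, replies)
            return restarts  # child exited normally
        except RuntimeError:
            restarts += 1  # child crashed: start a fresh one with clean state
            if restarts > max_restarts:
                raise


async def main():
    mailbox, replies = asyncio.Queue(), asyncio.Queue()
    for message in (1, 2, "crash", 5, "stop"):
        mailbox.put_nowait(message)
    restarts = await supervisor(mailbox, replies)
    totals = [replies.get_nowait() for _ in range(replies.qsize())]
    return restarts, totals


restarts, totals = asyncio.run(main())
print(restarts, totals)
```

After the crash, the restarted worker begins with a fresh total, so the messages queued behind the failure are still processed. That mirrors the "let it crash" style the supervisors enable: the failure is contained in one process and the rest of the system keeps going.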

In the next part, we will look at more common optimization techniques used across components such as load balancing, API gateways, message queues, and caches.

Turbo-Charging Your Stack Without Breaking It — Part 1 was originally published in Javarevisited on Medium, where people are continuing the conversation by highlighting and responding to this story.
