What Did Mount Everest Look Like 50 Million Years Ago? I Built an AI to Show You

Building Trail Narrator for the Gemini Live Agent Challenge

This article was created for the purposes of entering the Gemini Live Agent Challenge hackathon. #GeminiLiveAgentChallenge

Every national park trail has a story stretching back millions of years — layered in rock, carved by glaciers, shaped by eruptions. But most hikers walk right past it.

I wanted to change that. What if a single trail photo could unlock the deep geological story of any landscape? What if you could see what the Grand Canyon looked like when it was a shallow tropical sea 270 million years ago?

That’s Trail Narrator — an AI park ranger that transforms ordinary hiking photos into immersive, narrated time-travel experiences.

Trail Narrator – Your AI Park Ranger

The Idea

The Creative Storyteller track of the Gemini Live Agent Challenge asked for agents that weave together text, images, and audio in a single cohesive flow. I saw an opportunity to combine three of Gemini’s most powerful capabilities:

1. Google Search grounding — for factually verified location identification

2. Interleaved text + image generation — for time-travel visualizations

3. Text-to-speech — for warm, campfire-style narration

The result is an agent with a distinct persona, “Ranger,” who doesn’t just list geological facts but tells stories. Think David Attenborough meets a park ranger’s campfire talk.

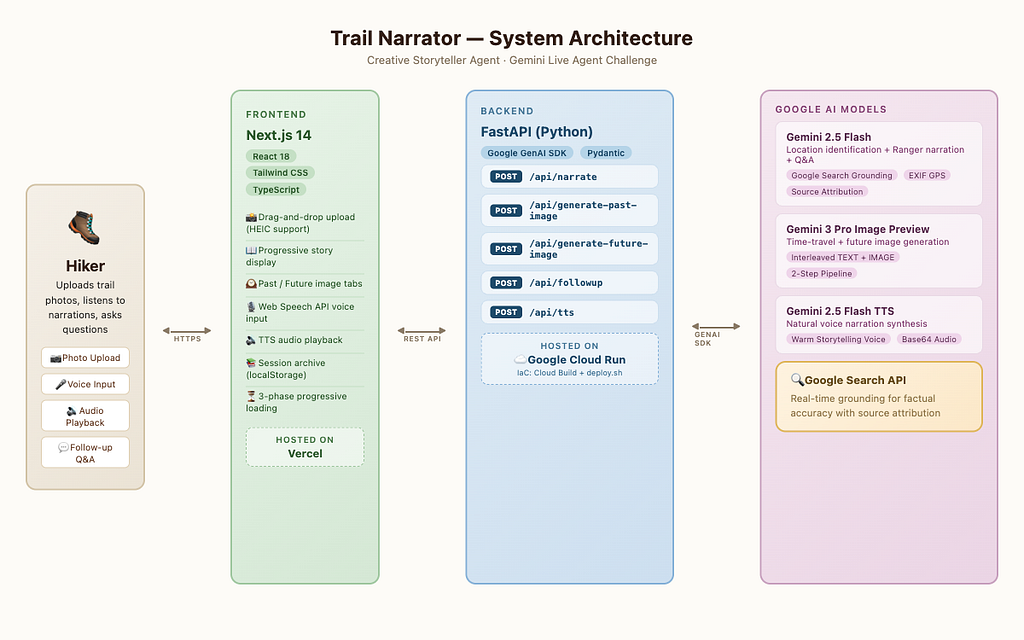

Architecture

The system has three layers:

– Frontend — Next.js 14 on Vercel, handling photo uploads, progressive story display, voice I/O, and a public story gallery

– Backend — FastAPI on Google Cloud Run, orchestrating all AI calls and managing sessions

– AI Layer — Three Gemini models working in concert
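One way to keep the three models straight is a small table of per-stage settings. This is a sketch under assumptions, not the project's code: the model ID strings follow Google's public naming at the time of writing (check the current model list before relying on them), and the `Stage` class and `describe` helper are hypothetical. The `response_modalities` values are the ones the Gemini API uses for text, image, and audio output.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Stage:
    """Settings for one step of a (hypothetical) three-model pipeline."""
    model: str
    response_modalities: tuple[str, ...]
    use_search_grounding: bool = False


# Model IDs follow public Gemini naming at the time of writing; verify before use.
PIPELINE = {
    # Identify the place and write the narration, grounded in Google Search.
    "narrate": Stage("gemini-2.5-flash", ("TEXT",), use_search_grounding=True),
    # Interleaved text + image output for the past/future visualizations.
    "visualize": Stage("gemini-3-pro-image-preview", ("TEXT", "IMAGE")),
    # Speech synthesis for Ranger's voice.
    "speak": Stage("gemini-2.5-flash-preview-tts", ("AUDIO",)),
}


def describe(pipeline: dict[str, Stage]) -> list[str]:
    """One human-readable line per stage, in pipeline order."""
    return [
        f"{name}: {stage.model} -> {'+'.join(stage.response_modalities)}"
        + (" (grounded)" if stage.use_search_grounding else "")
        for name, stage in pipeline.items()
    ]


print("\n".join(describe(PIPELINE)))
```

Keeping this in one place makes the division of labour explicit: only the narration stage enables search grounding, and only the visualization stage asks for image output.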

The Three-Model Pipeline

Each uploaded photo flows through a pipeline that uses different Gemini models for what they do best:

Model 1: Gemini 2.5 Flash + Google Search Grounding

This is where factual accuracy matters most. When a user uploads a trail photo, Gemini 2.5 Flash identifies the location, geological formations, rock types, flora, and fauna — all verified against Google Search results.

This grounding step is critical. Without it, the model might confidently misidentify a sandstone formation as granite. With Search grounding, every identification is backed by real sources.
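A minimal sketch of how the identification step might enforce that rule. It assumes a hypothetical `ask_grounded` callable that sends the photo and prompt to Gemini 2.5 Flash with the Google Search tool enabled and returns the reply text plus source URLs. In the google-genai Python SDK those citations are exposed under the response candidate's `grounding_metadata` (for example `grounding_chunks[i].web.uri`). Injecting the call keeps the sketch runnable offline; the prompt wording and JSON field names are illustrative.

```python
import json
from dataclasses import dataclass
from typing import Callable

# Hypothetical prompt; the real Trail Narrator prompt is not published.
IDENTIFY_PROMPT = (
    "Identify where this trail photo was taken, using Google Search to verify. "
    'Reply with JSON containing "location", "formations", "rock_types", '
    '"flora" and "fauna".'
)

# (photo bytes, prompt) -> (reply text, list of source URLs)
GroundedCall = Callable[[bytes, str], tuple[str, list[str]]]


@dataclass
class Identification:
    location: str
    details: dict
    sources: list[str]

    @property
    def verified(self) -> bool:
        # Only trust an identification that search grounding actually backed.
        return bool(self.sources)


def identify(photo: bytes, ask_grounded: GroundedCall) -> Identification:
    text, sources = ask_grounded(photo, IDENTIFY_PROMPT)
    # Replies may wrap the JSON in prose; keep only the outermost object.
    payload = json.loads(text[text.find("{"): text.rfind("}") + 1])
    location = payload.pop("location", "unknown")
    return Identification(location, payload, sources)


def fake_grounded_call(photo: bytes, prompt: str) -> tuple[str, list[str]]:
    """Offline stand-in for Gemini 2.5 Flash with the Google Search tool."""
    reply = 'Here you go: {"location": "Grand Canyon", "rock_types": ["Kaibab Limestone"]}'
    return reply, ["https://example.com/grand-canyon-geology"]


result = identify(b"<jpeg bytes>", fake_grounded_call)
print(result.location, result.verified)  # Grand Canyon True
```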

The same model then writes the narration — but with a carefully crafted persona prompt that produces campfire storytelling, not Wikipedia summaries:

“270 million years ago, right where you’re standing was the floor of a shallow tropical sea. Creatures with spiral shells and armored fish swam above limestone that would one day form these towering canyon walls…”
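The exact persona prompt isn't published, so here is a hypothetical reconstruction of the idea: the grounded facts from the identification step are passed in as material, and the persona instructions constrain tone and structure rather than content. The wording, the 200-word limit, and the `build_ranger_prompt` helper are all illustrative assumptions.

```python
# Hypothetical persona instructions for "Ranger"; wording is illustrative only.
RANGER_PERSONA = """You are Ranger, a warm, seasoned park ranger telling a story
around a campfire. Speak to the listener as "you", standing on this exact spot.
Move through deep time in order, oldest era first, using vivid sensory detail.
Use only the facts provided below; if a fact is missing, leave it out.
Keep it under 200 words. No bullet points, no headings."""


def build_ranger_prompt(location: str, facts: dict[str, list[str]]) -> str:
    """Combine the persona with grounded facts from the identification step."""
    fact_lines = [
        f"- {key.replace('_', ' ')}: {', '.join(values)}"
        for key, values in facts.items()
        if values  # skip empty categories instead of inviting invention
    ]
    return "\n\n".join([
        RANGER_PERSONA,
        f"Location: {location}",
        "Grounded facts:\n" + "\n".join(fact_lines),
        "Tell the campfire story of this place.",
    ])


prompt = build_ranger_prompt(
    "Grand Canyon, South Rim",
    {"rock_types": ["Kaibab Limestone"], "fauna": []},
)
print(prompt)
```

Restricting the model to the supplied facts is what lets the storytelling stay loose without undoing the grounding step.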

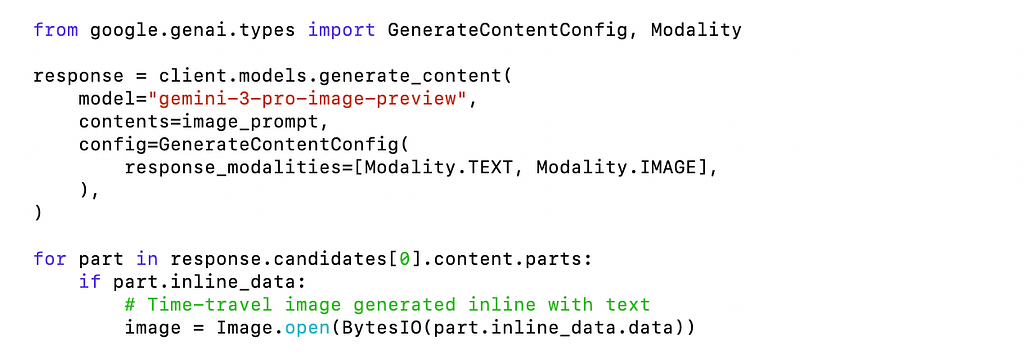

Model 2: Gemini 3 Pro Image Preview — Interleaved Output

This is the magic of the Creative Storyteller track. Gemini 3 Pro can generate images inline with text by using the interleaved TEXT + IMAGE output modalities.

I use a two-step pipeline:

1. Research step — determine the most dramatic geological era for this specific location

2. Generation step — create a photorealistic visualization of that era

The same pipeline also generates future projections — scientifically grounded visualizations of how climate change and geological processes will reshape the landscape.
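The research-then-generate flow might look like the sketch below, with one function serving both the past and future views through its `direction` argument. `research` and `generate` are hypothetical injected callables standing in for the Gemini calls (the second one would request TEXT + IMAGE output). The `Part` and `InlineData` classes mimic, as an assumption, the shape of a Gemini response part, which carries either `text` or `inline_data` with raw bytes.

```python
from dataclasses import dataclass
from typing import Callable, Literal, Optional


@dataclass
class InlineData:
    mime_type: str
    data: bytes


@dataclass
class Part:
    """Assumed shape of a response part: either text or inline image bytes."""
    text: Optional[str] = None
    inline_data: Optional[InlineData] = None


@dataclass
class Scene:
    era: str
    caption: str
    image: Optional[bytes]


def time_travel(
    location: str,
    direction: Literal["past", "future"],
    research: Callable[[str], str],
    generate: Callable[[str], list[Part]],
) -> Scene:
    # Step 1 (research): choose the era or future state to depict.
    if direction == "past":
        question = f"Which geological era of {location} was most dramatic?"
    else:
        question = f"How might climate and geology reshape {location}?"
    era = research(question)

    # Step 2 (generation): one interleaved TEXT + IMAGE request.
    parts = generate(
        f"Photorealistic view of {location}: {era}. Add a one-line caption."
    )

    # Split the interleaved parts: join the text, keep the first image.
    caption = " ".join(p.text for p in parts if p.text)
    image = next((p.inline_data.data for p in parts if p.inline_data), None)
    return Scene(era, caption, image)


scene = time_travel(
    "Grand Canyon",
    "past",
    research=lambda q: "Permian shallow sea, about 270 million years ago",
    generate=lambda prompt: [
        Part(text="A warm, shallow sea covers the future canyon."),
        Part(inline_data=InlineData("image/png", b"\x89PNG...")),
    ],
)
print(scene.era, scene.image[:4])
```

Because the caption and image come back from the same request, the caption describes the picture the model actually drew.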

Model 3: Gemini 2.5 Flash TTS

Ranger’s stories deserve to be heard, not just read. Gemini TTS converts narrations into natural speech with a warm, engaging storytelling voice.
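Gemini's TTS models return audio as inline bytes rather than a ready-to-play file. Google's TTS examples treat that data as raw 16-bit mono PCM at 24 kHz and write it out with Python's `wave` module. Assuming that format (confirm it against the `mime_type` your response reports), a backend could wrap the bytes in a WAV container before sending them to the browser. `pcm_to_wav` is a hypothetical helper:

```python
import io
import wave


def pcm_to_wav(pcm: bytes, rate: int = 24_000, channels: int = 1,
               sample_width: int = 2) -> bytes:
    """Wrap raw little-endian PCM samples in a WAV header, in memory."""
    buffer = io.BytesIO()
    with wave.open(buffer, "wb") as wav:
        wav.setnchannels(channels)
        wav.setsampwidth(sample_width)  # 2 bytes = 16-bit samples
        wav.setframerate(rate)
        wav.writeframes(pcm)
    return buffer.getvalue()


# One second of silence at 24 kHz, 16-bit mono: 24,000 frames * 2 bytes.
wav_bytes = pcm_to_wav(b"\x00\x00" * 24_000)
print(len(wav_bytes))  # 48044: 48,000 bytes of audio + 44-byte header
```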

The UX Challenge: Progressive Loading

Here’s the problem — narration takes ~15 seconds, but image generation takes 30–60 seconds. Making users stare at a spinner for a full minute is a terrible experience.

The solution: three-phase progressive loading.

Phase 1 (~15s): Narration text appears as soon as it is ready

Phase 2 (~30s): Past image generates in background → toast notification

Phase 3 (~45s): Future image generates in background → toast notification

Users start reading Ranger’s story within seconds. Images fade in later with gentle notifications. By the time they finish reading, the time-travel imagery is ready.

This pattern — show what you have, load the rest in the background — made the difference between a frustrating demo and an engaging one.
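On the backend, the three phases map naturally onto asyncio: await the narration, hand it back, and let the two image jobs finish in background tasks that notify the client as each completes. This is a sketch under assumptions, not the project's code: `narrate`, `make_image`, and `notify` are hypothetical stand-ins for the Gemini calls and for whatever push mechanism (polling, WebSocket, server-sent events) drives the toasts.

```python
import asyncio
from typing import Awaitable, Callable


async def run_story(
    photo: bytes,
    narrate: Callable[[bytes], Awaitable[str]],
    make_image: Callable[[str, str], Awaitable[bytes]],
    notify: Callable[[str, object], None],
) -> list[asyncio.Task]:
    # Phase 1: narration is the only step the user waits for.
    story = await narrate(photo)
    notify("narration", story)

    async def image_phase(direction: str) -> None:
        image = await make_image(story, direction)
        notify(f"{direction}_image", image)  # client shows a toast

    # Phases 2 and 3: start both image jobs without blocking the caller.
    return [asyncio.create_task(image_phase(d)) for d in ("past", "future")]


async def demo() -> list[str]:
    events: list[str] = []

    async def narrate(photo: bytes) -> str:
        await asyncio.sleep(0.01)  # stands in for ~15 s of narration
        return "270 million years ago..."

    async def make_image(story: str, direction: str) -> bytes:
        await asyncio.sleep(0.02 if direction == "past" else 0.03)
        return b"<png>"

    tasks = await run_story(
        b"<photo>", narrate, make_image, lambda kind, _: events.append(kind)
    )
    events.append("narration_returned")  # the story is on screen already
    await asyncio.gather(*tasks)  # a real server would not wait here
    return events


print(asyncio.run(demo()))
# ['narration', 'narration_returned', 'past_image', 'future_image']
```

Returning the task handles is deliberate: the event loop keeps only weak references to tasks, so a server should hold on to them (for example in the session) until they finish.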

Google Cloud Deployment

The backend runs on Google Cloud Run with automated deployment via Cloud Build.

Cloud Run’s auto-scaling (0–10 instances) means I only pay for what I use, and cold starts are fast enough for a demo.

Infrastructure-as-code lives in infra/deploy.sh — a single script that enables APIs, builds the container, deploys to Cloud Run, and outputs the service URL.

What I Learned

Google Search grounding is a game-changer. It transforms Gemini from a creative writer that occasionally hallucinates into a reliable, source-backed narrator. For any application where factual accuracy matters, grounding should be the default.

Interleaved output unlocks creative applications. Generating images inline with text — rather than as a separate API call — means the model understands the full context. The time-travel images aren’t generic; they’re specific to the exact geological era and location described in the narration.

Persona prompting matters more than you’d expect. The difference between “describe the geology” and “tell me a campfire story about the geology” is dramatic. Ranger’s warm, narrative voice makes the same facts far more engaging.

Progressive disclosure is essential for AI-heavy UX. When your pipeline takes 60 seconds end-to-end, the architecture of when you show what to the user matters as much as the AI itself.

Public Story Gallery

One feature I’m particularly proud of: every narration is automatically published to a public story gallery on the landing page. Other visitors can browse through explorations from hikers around the world — reading narrations, viewing time-travel images, and learning about geology. It turns Trail Narrator from a single-user tool into an educational platform.
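As a rough illustration of publish-on-create, here is an in-memory gallery that keeps the most recent stories, newest first. The `Story` fields, the `Gallery` class, and the size cap are hypothetical. A deployed version would need to persist to a database or object store rather than process memory, since Cloud Run instances are ephemeral and can scale to zero.

```python
from collections import deque
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Optional


@dataclass
class Story:
    location: str
    narration: str
    past_image_url: Optional[str] = None
    future_image_url: Optional[str] = None
    created_at: datetime = field(
        default_factory=lambda: datetime.now(timezone.utc))


class Gallery:
    """Hypothetical public gallery holding the newest `capacity` stories."""

    def __init__(self, capacity: int = 100) -> None:
        self._stories: deque[Story] = deque(maxlen=capacity)

    def publish(self, story: Story) -> None:
        # Newest first; once full, the oldest story drops off the right end.
        self._stories.appendleft(story)

    def latest(self, n: int = 12) -> list[Story]:
        return list(self._stories)[:n]


gallery = Gallery(capacity=2)
for place in ["Grand Canyon", "Yosemite Valley", "Mount Everest"]:
    gallery.publish(Story(place, f"A campfire story about {place}."))
print([s.location for s in gallery.latest()])  # ['Mount Everest', 'Yosemite Valley']
```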

Try It Yourself

- Live Demo: https://trailnarrator.com

- Devpost Link: https://devpost.com/software/trail-narrator

Upload a trail photo — any landscape works. Ranger is waiting to tell you its story.

Built with Gemini AI for the Gemini Live Agent Challenge.

#GeminiLiveAgentChallenge

What Did Mount Everest Look Like 50 Million Years Ago? I Built an AI to Show You was originally published in Javarevisited on Medium.
