You’re still designing for an architecture that no longer exists

Claude just showed us what replaced it.

Last Tuesday, I asked Claude to prepare a competitive analysis. Not in a chat window. Not through a prompt. I opened Cowork, pointed it to a folder on my desktop, and said what I needed. It read my files. It cross-referenced data from Slack through a connector. It pulled calendar context. It produced a document — formatted, structured, sourced — and saved it to my working folder. I didn’t open a single application. I didn’t navigate a single menu. I didn’t click through a single interface.

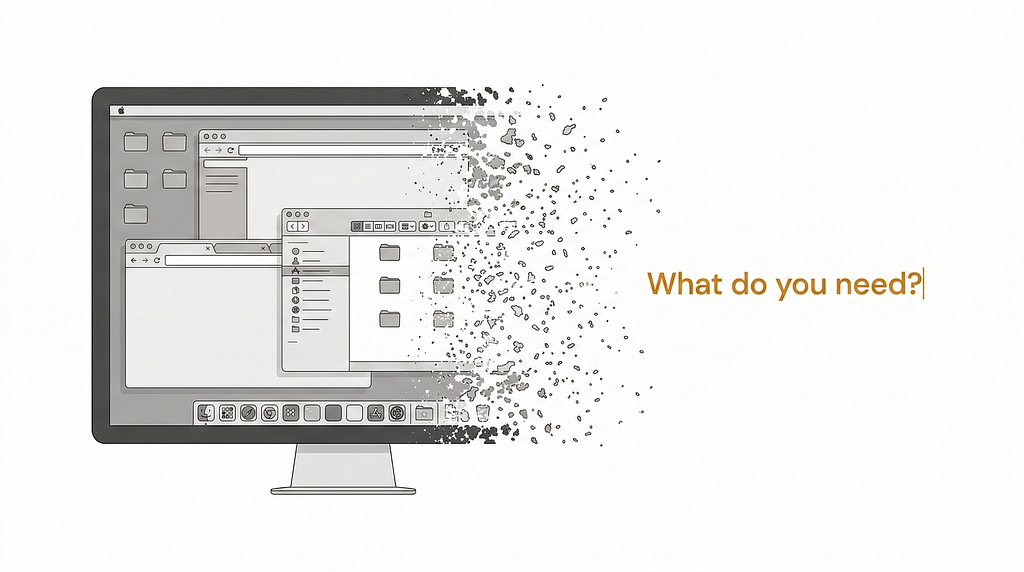

I sat there for a moment, staring at the screen. Not because something had gone wrong — but because nothing looked familiar. The windows were gone. The menus were gone. The entire choreography of opening, navigating, operating, saving, closing, and opening the next thing — the choreography I’d been performing for twenty years — had simply… disappeared.

And that’s when I realized: I wasn’t using a tool. I was working inside a different environment. One that nobody had bothered to name yet.

We keep asking the wrong question. We keep asking how good the assistant is: how well it writes, codes, summarizes, reasons. But that question belongs to the old architecture. What’s actually happening is bigger: the environment itself is changing. The space where we work, the one we’ve inhabited for four decades, built on windows and menus and folders and clicks, is being replaced by something structurally different. And if you’re still designing screens, flows, and navigation systems, you might be perfecting the blueprint of a building that’s already been demolished.

Forty years of the same interface

In 1984, Apple introduced the Macintosh and, with it, a way of working that would define every professional environment for the next four decades. The graphical user interface gave us windows, icons, menus, and a pointer — the WIMP paradigm. It was revolutionary. And then it froze.

Think about what changed between 1984 and today. Processing power grew exponentially. Storage went from kilobytes to terabytes. Networks connected billions of devices. Screens went from bulky CRTs to panels in our pockets. But the interaction logic — the fundamental way we relate to our work environment — remained essentially the same. You open an application. You navigate to what you need. You operate it manually, step by step. You save. You close. You open the next one.

The internet didn’t change this. It added connectivity, but you still navigated — now through links instead of folders. Mobile didn’t change it either. It made the environment portable, but you still tapped through apps, scrolled through feeds, clicked through menus. Even cloud computing — which transformed infrastructure — left the interaction surface largely untouched. You were still the operator. The system still waited for your commands.

As Satya Nadella put it: “Thirty years of change is being compressed into three years.” But what’s being compressed isn’t just speed or capability. It’s the architecture of the environment itself.

For four decades, the working environment asked you how. How do you want to format this? Which menu holds the function you need? What’s the right sequence of clicks to get from here to there? The entire interface was a map, and your job was to navigate it.

That map is disappearing. And what’s replacing it isn’t a better map — it’s a fundamentally different kind of space.

What Claude is actually showing us

Let me describe what working with Claude looks like today — not theoretically, but practically. Because the shift becomes obvious once you stop thinking about features and start paying attention to the experience.

Cowork reads files on your desktop, modifies documents, creates deliverables, and operates within your working folder — asking for confirmation before significant actions, working autonomously within defined boundaries. It launched in January 2026 and, within weeks, triggered a $285 billion selloff in software stocks. Not because of what it does, but because of what it replaces: the need to open applications at all.

Claude Code doesn’t assist developers — it is the development environment. Engineers describe entire systems in natural language, and Code builds them: writing files, running tests, submitting pull requests, spawning parallel sub-agents for different tasks. It hit $1 billion in run-rate revenue within six months of general availability. Spotify reports that roughly half of all their updates now flow through AI-generated code, with a 90% reduction in engineering time for large-scale migrations.

Claude in Chrome operates your browser — managing calendars, drafting emails, filling forms, extracting data — maintaining context across sessions.

Claude in Excel reads complex multi-tab workbooks, builds pivot tables, pulls live market data through connectors from S&P Global, Moody’s, and FactSet.

Memory doesn’t just store preferences. It maintains hierarchical context — organization-wide policies, project-level standards, individual preferences — and recovers the full state of your working environment in seconds. As one developer described it: “Treat it as system state. The file becomes the source of truth.”
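To make the idea of hierarchical context concrete, here is a minimal sketch of how three layers could resolve into one working state. It is not a description of how Claude’s memory is implemented. The layer contents, the key names, and the rule that the more specific layer wins are all assumptions made for illustration.

```python
from collections import ChainMap

# Hypothetical layers, listed from most general to most specific.
organization = {"tone": "formal", "citation_style": "footnotes", "language": "en"}
project = {"citation_style": "inline links", "template": "competitive-analysis"}
individual = {"tone": "concise"}


def resolve_context(*layers):
    """Merge layers so that later (more specific) layers override earlier ones."""
    # ChainMap checks its maps left to right, so the most specific layer goes first.
    return dict(ChainMap(*reversed(layers)))


context = resolve_context(organization, project, individual)
print(context["tone"])            # concise (individual overrides organization)
print(context["citation_style"])  # inline links (project overrides organization)
print(context["language"])        # en (only the organization layer sets it)
```

The design question this raises is the one a designer would own: which layer is allowed to override which, and where an organization-wide policy should be locked against individual preference.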

And underneath all of this, MCP — the Model Context Protocol — connects Claude to your entire technology stack: Google Drive, Slack, GitHub, Gmail, Figma, Notion, Salesforce, and thousands more. With 97 million monthly SDK downloads and adoption by OpenAI, Google, and Microsoft, MCP has been donated to the Linux Foundation as an open standard — what Thoughtworks described as one of the fastest standards convergence cycles in recent tech history.
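For readers who have never seen what travels over MCP, the sketch below builds the kind of request a client sends to ask a server to run a tool. MCP messages use JSON-RPC 2.0, and the `tools/call` method with `name` and `arguments` parameters reflects my reading of the published specification, so check the spec before relying on it. The tool name `search_messages` and its arguments are hypothetical.

```python
import json


def build_tool_call(request_id, tool_name, arguments):
    """Build a JSON-RPC 2.0 request asking an MCP server to run one tool."""
    return {
        "jsonrpc": "2.0",
        "id": request_id,
        "method": "tools/call",
        "params": {"name": tool_name, "arguments": arguments},
    }


# Hypothetical tool and arguments, standing in for a Slack-style connector.
request = build_tool_call(
    1,
    "search_messages",
    {"channel": "competitive-intel", "query": "pricing"},
)
print(json.dumps(request, indent=2))
```

The point for designers is that the request names an outcome-oriented tool and its inputs, not a sequence of clicks.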

Now step back and look at what I just described. Not a chat interface with added capabilities. A working environment — one where the system reads your context, understands your purpose, operates across your tools, and delivers results while you focus on what actually matters.

Nick Turley, OpenAI’s Head of Product, said it plainly: “We never meant to build a chatbot; we meant to build a super assistant, and we got a little sidetracked.”

Everyone got sidetracked. The text box made us think we were talking to a tool. We were actually sitting inside the first draft of a new environment.

The three variables of a new architecture

If this is a new environment and not just a better tool, it should be structurally different — not incrementally improved. And it is. But to see the structure, you need to stop looking at capabilities and start looking at variables. What coordinates define this space that didn’t exist in the previous one?

I’ve spent the last two years studying this question, and what I’ve found is that three variables consistently distinguish this new architecture from everything that came before it.

Intention. In the traditional working environment, you tell the system how to do things. You navigate menus, select options, sequence operations. The system doesn’t know what you want; it knows what you clicked. In the new environment, you express what you want to achieve. The system interprets your purpose, weighs context, and determines the path. Claude doesn’t execute commands; it interprets goals. MCP doesn’t connect tools for the sake of integration; it connects them in the service of what you’re trying to accomplish. This is the shift from procedural thinking to intentional thinking: from operating a machine to having a conversation with a collaborator who understands purpose.

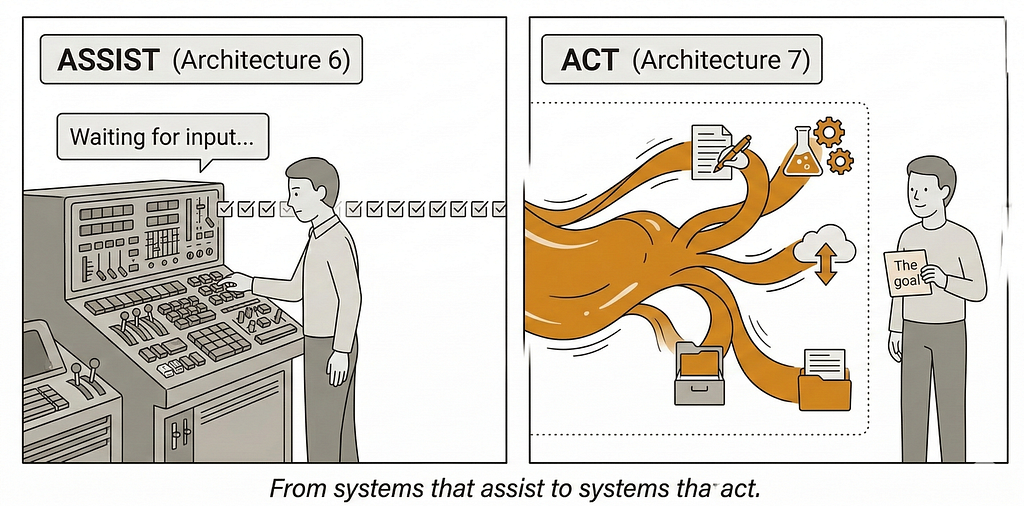

Autonomy. In the traditional environment, systems assist. They wait for your next instruction. Every action requires a human operator pressing a button, selecting an option, confirming a step. In the new environment, systems act. Claude Code doesn’t wait for you to dictate each line of code — it plans, executes, tests, iterates, and spawns sub-agents to work in parallel. Cowork doesn’t need step-by-step guidance — it works toward outcomes within boundaries you define. This is not automation in the industrial sense, where machines repeat predefined sequences. This is agency: the capacity to pursue goals through autonomous decision-making while maintaining human oversight.

Adaptation. In the traditional environment, systems remain fixed. Your software behaves the same way on day one and day one thousand. If you want it to change, you configure it manually — or wait for the next version. In the new environment, systems evolve. Claude’s memory learns your preferences, your team’s standards, your organization’s policies. It gets better at understanding you over time. The interaction isn’t static — it’s alive. What was once a tool that needed to be configured becomes an environment that learns to fit.

These three variables — intention, autonomy, and adaptation — don’t operate in isolation. They are unified by a fourth principle: orchestration. MCP is the clearest manifestation of this. It’s the connective tissue that allows intentions to flow across tools, autonomous actions to coordinate across systems, and adaptive learning to compound across interactions. As Microsoft’s CTO Kevin Scott observed at Build 2025: “MCP is filling such an unbelievably big need in the ecosystem… it’s really kind of breathtaking.” Atlassian’s CTO Rajeev Rajan called it “the gold standard for how LLMs interact with tools.”

But orchestration isn’t just a protocol. It’s the architectural principle that turns three independent variables into a coherent environment. It’s what makes the difference between a collection of smart features and a fundamentally new working space.

Here’s the critical point: these aren’t features of Claude. They are coordinates of a new architecture. Any system that embodies intention, autonomy, adaptation, and orchestration is operating in this new space — regardless of which company built it. Claude happens to be the most complete manifestation today. But the architecture is bigger than any single product.

Everyone is describing the same thing

What makes this moment historically significant is that the people building these systems are arriving at the same conclusion from entirely different directions — and most of them don’t realize they’re describing the same thing.

Goldman Sachs’ CIO Marco Argenti writes: “Rather than functioning as one-dimensional applications, AI models are becoming operating systems that independently access tools in order to perform tasks.” Sam Altman told Sequoia Capital: “People in college use it as an operating system.” Mustafa Suleyman, CEO of Microsoft AI, frames it as: “This is going to be the next platform of computing.” Nvidia’s Jensen Huang and Siemens announced a partnership to build what they literally called “the Industrial AI Operating System.”

From the design world, the language is different but the observation is the same. John Maeda describes the shift from UX to AX — Agentic Experience — where “designers become orchestrators of experiences rather than crafters of interfaces.” Rachel Kobetz, PayPal’s Chief Design Officer, argues that “the real work of design is orchestrating how intelligence behaves.” Jakob Nielsen, in his 2026 predictions, charts what he calls “a fundamental shift from Conversational UI to Delegative UI.” And Jenny Wen, who leads design for Claude at Anthropic, put it bluntly on Lenny’s Podcast: “This design process that designers have been taught, we sort of treat it as gospel. That’s basically dead.”

Orchestrators. Intelligence that behaves. Delegative rather than conversational. The design process itself declared dead — by the person designing the system that killed it. Everyone is circling the same structural transformation.

I should be honest about where this breaks. Jared Spool makes a fair point: “The world romanticizes AI as an all-powerful, game-changing technology when, in reality, it barely works. But it demos well.” He’s not wrong: intention interpretation still fails, autonomy can produce confidently wrong results, and adaptation has boundaries that aren’t always transparent. The gap between what these systems promise and what they reliably deliver is real, and anyone designing for this architecture needs to take that gap seriously. But the existence of a gap doesn’t invalidate the architecture. The early web had broken links and browsers that crashed, and it could take minutes to load a single page. The architecture was still real. The question was always whether the structural logic would hold as the technology matured. I believe this one will, not because every interaction works today, but because the variables are right.

This is not a product. This is an architecture.

There is a pattern that most people in technology miss because it operates on timescales longer than product cycles.

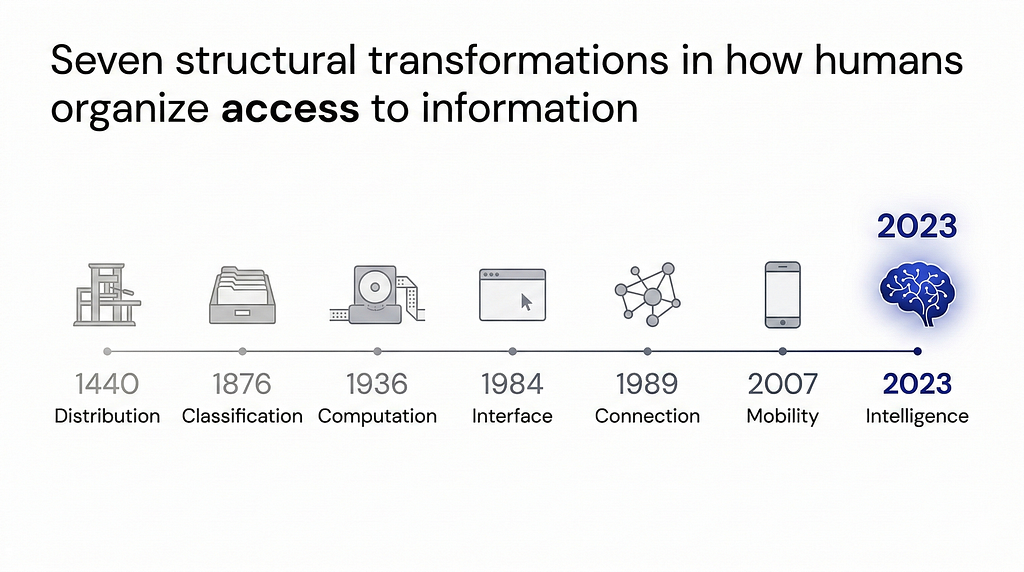

In 1440, the printing press didn’t improve manuscripts — it created a new architecture for distributing knowledge. In 1876, the Dewey Decimal System didn’t improve bookshelves — it created a new architecture for classifying information. In 1936, the Turing machine didn’t improve calculators — it created a new architecture for computing. In 1984, the graphical interface didn’t improve command lines — it created a new architecture for human-computer interaction. In 1989, the World Wide Web didn’t improve networks — it created a new architecture for connecting information. In 2007, the smartphone didn’t improve phones — it created a new architecture for mobile access.

Each of these was a structural transformation in how humans organize and access information. Not a better version of what came before, but a fundamentally new set of coordinates — new variables, new paradigms, new possibilities that simply didn’t exist in the previous architecture.

What we are witnessing now is the seventh such transformation. The architecture of Intelligence. A structure defined not by windows and clicks and navigation, but by intention, autonomy, adaptation, and orchestration.

I call this Architecture 7 — the seventh structural transformation in how humans organize access to information.

In The Intelligence Architect, I mapped each of these seven transformations in detail: the variables that define them, the design principles that emerge from each shift, and the practical frameworks for building within a new architecture. But you don’t need the book to see what’s happening. You just need to pay attention to what Claude is showing us right now: we are not living through an AI upgrade. We are living through an architectural shift.

And architectural shifts don’t ask for permission. They don’t arrive with a press release explaining what changed. They arrive as a quiet realization that the environment you’ve been working in — the one that felt permanent, the one built on windows and menus and forty years of muscle memory — has already been replaced by something you can’t yet name but can already feel.

Claude is the first Architecture 7 environment built for people who work with information. It won’t be the last. The same structural principles — intention replacing navigation, autonomy replacing manual operation, adaptation replacing static configuration — will reshape environments for entertainment, communication, education, and every domain where humans interact with complex systems. The evidence — from $1 billion run-rates to $285 billion selloffs to 97 million monthly SDK downloads — makes that trajectory unmistakable.

What this means for designers, practically

If the architecture has changed, so has the work. Designing for intention means your wireframes become something closer to operating manuals — not layouts of screens, but descriptions of outcomes the system should pursue. Designing for autonomy means defining boundaries instead of workflows: what the system can do on its own, where it must pause for confirmation, how it recovers when it gets things wrong. Designing for adaptation means building feedback loops rather than preference panels — mechanisms through which the environment learns from each interaction rather than waiting to be manually configured. And designing for orchestration means mapping how intelligence flows across tools, not how users navigate between them. The screen is no longer the unit of design. The intent is.
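One way to picture “defining boundaries instead of workflows” is as a small policy object rather than a flowchart. The sketch below is an assumption-laden illustration, not how Cowork or Claude Code work internally: the action names, the three-way decision, and the `run` helper with its `execute`, `confirm`, and `undo` callbacks are all hypothetical.

```python
from dataclasses import dataclass, field
from enum import Enum


class Decision(Enum):
    ALLOW = "allow"      # the system may act on its own
    CONFIRM = "confirm"  # the system must pause and ask first
    DENY = "deny"        # outside the boundary entirely


@dataclass
class Boundary:
    autonomous: set = field(default_factory=set)
    needs_confirmation: set = field(default_factory=set)

    def decide(self, action):
        if action in self.autonomous:
            return Decision.ALLOW
        if action in self.needs_confirmation:
            return Decision.CONFIRM
        # Anything not explicitly listed is treated as out of bounds.
        return Decision.DENY


def run(action, boundary, execute, confirm, undo):
    """Attempt one action inside the boundary, rolling back if it fails."""
    decision = boundary.decide(action)
    if decision is Decision.DENY:
        return "refused"
    if decision is Decision.CONFIRM and not confirm(action):
        return "declined"
    try:
        execute(action)
        return "done"
    except Exception:
        undo(action)  # recovery path: restore the previous state
        return "rolled back"


# Hypothetical action names for a document-handling agent.
boundary = Boundary(
    autonomous={"read_file", "draft_document"},
    needs_confirmation={"delete_file", "send_email"},
)
print(boundary.decide("read_file"))    # Decision.ALLOW
print(boundary.decide("send_email"))   # Decision.CONFIRM
print(boundary.decide("format_disk"))  # Decision.DENY
```

Each of the three design questions in the paragraph above maps to one part of the sketch: the two sets say what the system can do alone and where it must pause, and the `undo` callback is the place where recovery gets designed.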

You are already inside it

If you used Claude this week — or ChatGPT, or Copilot, or any AI system that understood your intent, acted autonomously, and adapted to your context — you didn’t use a chatbot. You worked inside a new environment. You just didn’t have the language for it yet.

The language matters. Because when you call it “a chatbot,” you design chat interfaces. When you call it “an assistant,” you design helper features. When you call it “a tool,” you design toolbars. But when you recognize it as a new architecture — with its own variables, its own structural principles — you design differently. You stop asking “where should the button go?” and start asking “how does the system interpret intention?” You stop designing navigation and start designing orchestration. You stop building static configurations and start building adaptive environments.

The forty-year environment built on windows, menus, and manual navigation is giving way to one built on intention, autonomy, adaptation, and orchestration. Every major voice in technology and design is converging on this observation from different angles. The question is no longer whether the shift is happening.

The question is whether you’ll design for the architecture that’s arriving — or keep drawing menus for the one that already left.

Adrian Levy is a UX architect and information architecture researcher. His previous work for UX Collective includes “What Perplexity’s AI browser reveals about the future of UX” and “The eleven commandments of AI UX.” He is the author of The Intelligence Architect, which maps the seven structural transformations in how humans organize access to information — from the printing press to the age of intelligence.

You’re still designing for an architecture that no longer exists was originally published in UX Collective on Medium.
